How Federal Agencies Are Using AI to Transform Public Services

Every year, the federal government processes hundreds of millions of benefit claims, passport applications, tax filings, veteran service requests, and agency inquiries. For decades, the infrastructure behind these services has been a patchwork of legacy systems, paper-based workflows, and siloed databases, many of them older than the internet itself.

The result? Citizens waiting weeks for benefits they needed yesterday. Federal employees buried under manual data entry. Security vulnerabilities sitting inside codebases that predate modern cybersecurity frameworks. According to a 2019 Government Accountability Office (GAO) report, 10 of the federal government’s most critical legacy IT systems ranged from about 8 to 51 years old and collectively cost taxpayers about $337 million per year just to operate and maintain.

But something significant is shifting. A convergence of large language models, agentic AI frameworks, cloud-native infrastructure, and intelligent automation is giving federal agencies unprecedented capability to modernize, not in years but in months.

This isn’t a futuristic conversation. It’s happening now. And for federal technology leaders, the agencies moving fastest are not the ones with the biggest budgets; they’re the ones with the clearest strategy.

This post breaks down exactly how federal government digital transformation is unfolding through AI, what’s working, what’s failing, and what your agency should be doing next.

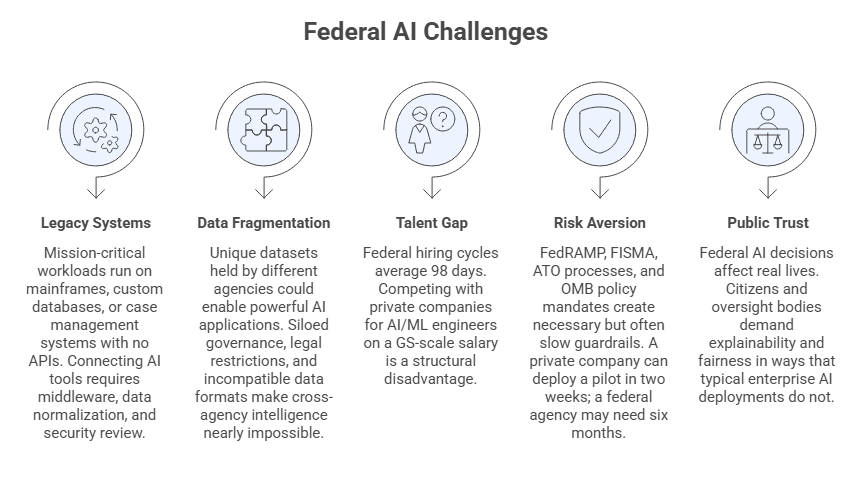

Key Challenges Facing Federal Agencies in the AI Era

Before diving into solutions, it’s critical to understand why federal AI adoption is so uniquely difficult. These aren’t the same barriers a private-sector enterprise faces.

1. Legacy Systems That Resist Integration

Many agencies run mission-critical workloads on COBOL-based mainframes, custom 1980s databases, or early-2000s case management systems with no APIs. Connecting AI tools to these systems requires middleware, data normalization, and extensive security review, adding time and cost before a single model is trained.

2. Data Fragmentation Across Agencies

The FBI, DHS, SSA, VA, and IRS each hold unique datasets that could collectively enable powerful AI applications, but siloed governance, legal restrictions, and incompatible data formats make cross-agency intelligence nearly impossible without deliberate architecture.

3. The Talent Gap

Federal hiring cycles average 98 days. Competing with Amazon, Google, and Palantir for AI/ML engineers on a GS-scale salary is a structural disadvantage that agencies must work around, not through.

4. Risk Aversion and Compliance Overhead

FedRAMP, FISMA, ATO processes, and OMB policy mandates create necessary but often slow guardrails. A private company can deploy a pilot in two weeks; a federal agency may need six months of security review for the same workload.

5. Public Trust and Explainability

Federal AI decisions affect real lives: benefits determinations, law enforcement flagging, immigration processing. Citizens and oversight bodies demand explainability and fairness in ways that typical enterprise AI deployments do not. “Black box” models are politically and legally untenable.

Emerging Tech Trends Solving the Federal AI Problem

Despite these challenges, government technology solutions have evolved dramatically. Several trends are converging to make federal AI not just viable, but transformative.

Trend 1: Agentic AI for Complex Workflow Automation

Agentic AI (systems that can plan, reason, execute multi-step tasks, and self-correct) is moving beyond chatbot-level interaction. Federal agencies are beginning to deploy AI agents that can independently navigate case files, trigger downstream workflows, query databases, and escalate exceptions to human reviewers. Determining which AI approach best fits a given public service has become a strategic priority for CIOs and CTOs across the federal landscape.

Trend 2: Generative AI for Knowledge Management and Citizen Engagement

Large language model-based applications are being embedded into agency knowledge portals, internal help desks, and citizen-facing chatbots. Agencies like the SSA and USCIS are piloting generative AI tools to help caseworkers find relevant regulations, draft correspondence, and summarize case histories. The benefits of generative AI in government are significant, but so are the risks, particularly around hallucination, data leakage, and procurement compliance.

Trend 3: AI-Powered Identity and Access Management

Physical and digital identity verification has long been a bottleneck across federal services, from veteran benefit onboarding to contractor credentialing. Modern AI-powered identity verification solutions use computer vision, behavioral biometrics, and document authentication models to verify identities in seconds rather than days, dramatically reducing fraud and wait times.

Trend 4: Federated Learning and Privacy-Preserving AI

Agencies can now train shared AI models on distributed data without centralizing sensitive PII, a breakthrough for cross-agency collaboration. Federated learning allows the IRS and Treasury, or the VA and DoD, to develop joint fraud detection or health models without exposing underlying datasets.
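To make the federated idea concrete, here is a minimal sketch of federated averaging on a toy one-parameter model. Everything in it is an illustrative assumption (the function names, the model `y = w * x`, the learning rate); a real deployment would add secure aggregation, differential privacy, and an authorized framework. The point it shows is that only model weights leave each participant, never the underlying records.

```python
def local_update(w, data, lr=0.1, epochs=5):
    """Train on one participant's private (x, y) pairs for the model y = w * x."""
    for _ in range(epochs):
        # Gradient of mean squared error with respect to w.
        grad = sum(2 * (w * x - y) * x for x, y in data) / len(data)
        w -= lr * grad
    return w


def federated_average(weights, sizes):
    """Combine local weights, weighting each participant by its record count."""
    total = sum(sizes)
    return sum(w * n for w, n in zip(weights, sizes)) / total


def train(clients, rounds=20):
    """Run several rounds; raw data never leaves the `clients` lists."""
    w = 0.0
    for _ in range(rounds):
        local_weights = [local_update(w, data) for data in clients]
        w = federated_average(local_weights, [len(data) for data in clients])
    return w
```

In this sketch each entry in `clients` stands in for one agency's private dataset. The coordinator only ever sees the numbers returned by `local_update`.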

Trend 5: AI-Augmented Cybersecurity

With over 30,000 cyberattacks targeting federal systems daily, agencies are deploying AI-driven security operations centers (SOCs) capable of correlating threat signals across networks in real time, far beyond the capacity of any human analyst team.

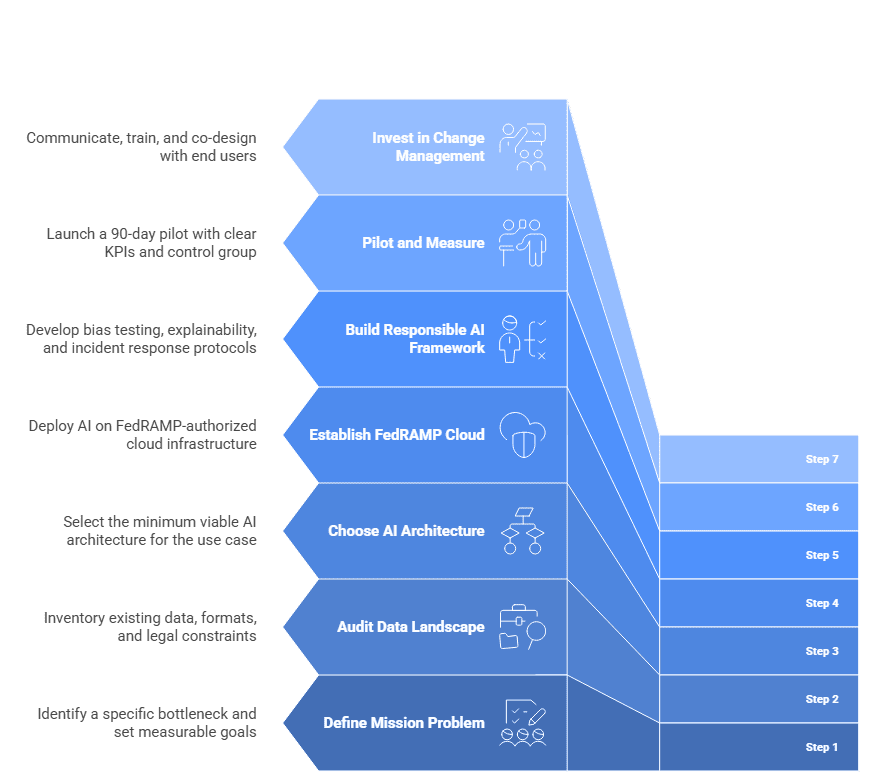

Step-by-Step: How Federal Agencies Can Deploy AI Effectively

Successful federal AI programs don’t happen by accident. They follow a disciplined, phased approach that balances speed with governance.

Step 1 — Define the Mission Problem, Not the Technology Solution

Too many federal AI initiatives start with “we need an AI” rather than “we have this specific bottleneck.” Start with a concrete problem: “Benefits processing takes 47 days and has a 23% error rate. How do we reduce both by at least 50%?” Metrics-first thinking prevents technology theater and ensures ROI is measurable.
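A metrics-first problem statement can be written down as data before any model exists. The sketch below is one hypothetical way to do that, using the 47-day, 23% error rate, and 50% reduction figures from the example above; the `Kpi` class and its field names are assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class Kpi:
    name: str
    baseline: float          # current measured value
    target_reduction: float  # required improvement as a fraction, e.g. 0.5

    @property
    def target(self) -> float:
        """Value the pilot must reach or beat."""
        return self.baseline * (1 - self.target_reduction)

    def met(self, observed: float) -> bool:
        """True when the observed value is at or below the target."""
        return observed <= self.target


# Figures taken from the example problem statement.
processing_days = Kpi("processing_days", baseline=47, target_reduction=0.5)
error_rate = Kpi("error_rate", baseline=0.23, target_reduction=0.5)
```

With these definitions the pilot succeeds only if processing time falls to 23.5 days or less and the error rate to 11.5% or less, which gives the program an unambiguous pass/fail line.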

Step 2 — Audit Your Data Landscape Before Building Anything

AI is only as powerful as the data it learns from. Conduct a thorough data inventory: What data exists? Where is it stored? What format is it in? What can legally be used to train a model? What retention policies apply? Agencies that skip this step routinely deploy AI systems that fail in production because of data quality problems that were entirely foreseeable.

Step 3 — Choose the Right AI Architecture for the Use Case

Not all AI is equal. A rules-based decision tree may outperform a neural network for a narrow eligibility determination. A retrieval-augmented generation (RAG) system may serve a knowledge worker better than a fine-tuned model. Agentic frameworks add power but also complexity and risk. Map your use case to the minimum viable AI architecture.
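For readers unfamiliar with retrieval-augmented generation, the sketch below shows only the retrieval and prompt-building half, using simple word-overlap scoring in place of a real embedding model. The passages are made-up placeholder text, not actual agency policy, and the function names are assumptions. The language model call itself is deliberately left out.

```python
import math
import re
from collections import Counter


def tokenize(text):
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a, b):
    """Cosine similarity between two token-count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(question, passages, k=1):
    """Return the k passages most similar to the question."""
    q = Counter(tokenize(question))
    ranked = sorted(passages,
                    key=lambda p: cosine(q, Counter(tokenize(p))),
                    reverse=True)
    return ranked[:k]


def build_prompt(question, passages, k=1):
    """Assemble a grounded prompt; sending it to a model is out of scope here."""
    context = "\n".join(f"- {p}" for p in retrieve(question, passages, k))
    return ("Answer using only the context below.\n\n"
            f"Context:\n{context}\n\nQuestion: {question}")


# Hypothetical placeholder passages, invented for this example.
PASSAGES = [
    "Survivor benefits require a certified copy of the death certificate.",
    "Passport renewals by mail use the renewal form.",
    "Disability claims must include medical evidence from a treating physician.",
]
```

The design choice worth noting is that the model is asked to answer from retrieved text rather than from memory, which is what makes a RAG answer traceable to a source document.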

Step 4 — Establish a FedRAMP-Authorized Cloud Foundation First

Deploying AI on unauthorized infrastructure is not an option. Ensure your cloud environment is FedRAMP Moderate or High authorized, depending on data sensitivity. Platforms like AWS GovCloud, Azure Government, and Google Public Sector provide FedRAMP-authorized environments with AI/ML services included.

Step 5 — Build a Responsible AI Framework Before Launch

Before any model touches a production workflow, establish: a bias testing protocol, an explainability standard (especially for adverse decisions), a human-in-the-loop requirement for high-stakes outputs, and an incident response plan for model failures. This is not optional red tape; it is your legal and ethical obligation.
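A human-in-the-loop requirement can be enforced in code rather than left to policy. The sketch below is one hypothetical routing rule: adverse decisions always go to a person, and so does anything below a confidence threshold. The class, the labels, and the 0.9 threshold are illustrative assumptions, not a standard.

```python
from dataclasses import dataclass


@dataclass
class ModelOutput:
    case_id: str
    decision: str      # "approve" or "deny" in this sketch
    confidence: float  # model confidence between 0.0 and 1.0


def route(output: ModelOutput, min_confidence: float = 0.9) -> str:
    """Decide whether a model output may proceed without human review."""
    if output.decision == "deny":
        # Adverse decisions are never automated in this sketch.
        return "human_review"
    if output.confidence < min_confidence:
        # Low-confidence outputs are escalated regardless of the decision.
        return "human_review"
    return "auto_process"
```

Under these assumptions, a confident approval is the only path that skips review, so the model can speed up favorable outcomes but cannot deny anything on its own.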

Step 6 — Pilot Small, Measure Relentlessly, Scale with Evidence

Launch a 90-day pilot with a defined cohort of users, a control group, and clear KPIs. Avoid the temptation to scale prematurely. Federal AI programs that fail publicly almost always failed quietly first and were scaled without adequate validation.
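Comparing a pilot cohort against a control group usually comes down to asking whether the observed difference could be chance. One common way to check an error-rate KPI is a two-proportion z-test, sketched below with the standard library only. The function name is an assumption, and any counts passed in are for illustration, not reported results.

```python
import math


def two_proportion_z_test(errors_control, n_control, errors_pilot, n_pilot):
    """Return (z, two-sided p-value) for the difference in error rates."""
    p_control = errors_control / n_control
    p_pilot = errors_pilot / n_pilot
    # Pooled error rate under the null hypothesis of no difference.
    pooled = (errors_control + errors_pilot) / (n_control + n_pilot)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_control + 1 / n_pilot))
    if se == 0:
        raise ValueError("error rates are all 0 or all 1; test is undefined")
    z = (p_control - p_pilot) / se
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value
```

A positive `z` means the pilot group made fewer errors than the control group. A small p-value suggests the improvement is unlikely to be noise, which is the kind of evidence the scaling decision should rest on.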

Step 7 — Invest in Change Management Alongside the Technology

Federal employees are not passive recipients of AI tools. They are practitioners who will adopt or resist based on trust, training, and perceived threat to their roles. Agencies that invest in workforce communication, AI literacy training, and co-design with end users see dramatically higher adoption rates and better model performance from human feedback loops.

Real-World Use Cases: Federal AI in Action

The Department of Veterans Affairs (VA): Faster Claims, Better Outcomes

The VA processes approximately 1.5 million disability claims annually. Historically, average processing time exceeded 100 days. Through AI-assisted claims processing, using NLP to extract diagnostic codes from medical records and machine learning to triage incoming claims, the VA can flag straightforward claims while surfacing complex cases for specialist attention faster.

The system doesn’t replace claims processors. It pre-populates data fields, highlights documentation gaps, and ranks evidence relevance, compressing hours of manual review into minutes. The human decision remains; the AI eliminates the administrative burden around it.

The IRS: AI-Driven Audit Selection and Fraud Detection

The IRS deployed AI models to analyze tax return patterns across millions of filings, identifying statistical anomalies consistent with fraudulent activity with far greater precision than rules-based legacy systems. The result: higher audit yield rates (catching more actual fraud per audit conducted) and a reduction in false positives that had historically burdened compliant taxpayers.

Critically, the IRS built explainability requirements into the model selection criteria: every audit flag must trace to a human-readable audit trail, ensuring constitutional due process protections remain intact.

USCIS: AI for Immigration Document Processing

U.S. Citizenship and Immigration Services tested AI-powered document analysis tools capable of reading and classifying immigration forms, identifying inconsistencies across supporting documents, and flagging potential fraud indicators. What previously required trained adjudicators spending significant time on document review was accelerated dramatically, freeing those professionals to focus on judgment-intensive aspects of case decisions.

The Social Security Administration (SSA): Conversational AI for Citizen Services

The SSA handles millions of public inquiries monthly. Deploying a generative AI-powered virtual assistant trained on SSA policy documentation and FAQ databases allowed the agency to resolve a significant share of routine inquiries (benefit status, eligibility questions, application guidance) without queue wait times. The system escalates to a human agent for complex or sensitive situations and maintains a full conversation transcript for continuity.

Department of Defense (DoD): AI in Cybersecurity Operations

DARPA and CISA have both advanced AI-driven anomaly detection across military and civilian federal networks. Machine learning models trained on network traffic baselines can now identify lateral movement patterns, unusual authentication sequences, and data exfiltration signatures in near real time, flagging threats that would never surface in manual log review.
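The core idea of baseline-driven detection can be shown with a much simpler statistical stand-in than the machine learning models described above. The sketch below learns the mean and spread of hourly authentication counts and flags observations far outside that range. It is a plain z-score check, not any agency's actual method, and the function names and threshold are assumptions.

```python
from statistics import mean, stdev


def fit_baseline(counts):
    """Summarize normal activity as (mean, sample standard deviation)."""
    return mean(counts), stdev(counts)


def flag_anomalies(baseline, observed, threshold=3.0):
    """Return indexes of observations more than `threshold` deviations from the mean."""
    mu, sigma = baseline
    if sigma == 0:
        # A perfectly flat baseline gives no scale to measure deviation against.
        return []
    return [i for i, x in enumerate(observed)
            if abs(x - mu) / sigma > threshold]
```

Production systems replace the single mean with models over many signals at once, but the workflow is the same: learn what normal looks like, then surface what deviates from it for an analyst to review.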

Best Practices & Expert Tips for Federal AI Leaders

Treat AI Governance as a Product, Not a Policy Document. Static AI ethics policies become outdated the moment they’re published. The most effective agencies treat AI governance as a living product with version control, regular review cycles, and dedicated ownership.

Prioritize Use Cases with High Volume and Low Ambiguity First. The fastest wins in federal AI come from automating decisions that are high volume, well-defined, and currently consuming disproportionate human time. Leave the ambiguous, high-stakes decisions for later, when your governance framework is mature.

Embed AI Expertise Inside Program Teams. Centralizing all AI capability in a standalone AI office creates bottlenecks and reduces program relevance. The best-performing federal agencies are distributing AI competency into mission program teams while the central office focuses on standards, risk management, and procurement vehicles.

Use Modular Procurement Vehicles. Traditional federal IT procurement cycles are too slow for AI development cycles. Use OTAs (Other Transaction Agreements), BPAs, and IDIQ vehicles with modular task orders to maintain agility while staying compliant.

Build for Explainability from Day One. Retrofitting explainability into a deployed model is expensive and often architecturally impossible. Make it a design requirement, not an afterthought.

Don’t Overlook the Downstream Data Pipeline. AI models degrade when input data quality drifts. Build monitoring for data pipeline health, not just model performance metrics, into your operations plan.
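One widely used way to monitor input drift is the Population Stability Index (PSI), which compares the distribution of a feature at training time with what the pipeline is receiving now. The sketch below is a minimal standard-library version; the binning scheme, the `eps` floor, and the function name are assumptions, and the often-quoted alert level of roughly 0.25 is a rule of thumb rather than a standard.

```python
import math


def psi(expected, actual, bins=10, eps=1e-6):
    """Population Stability Index of `actual` against the `expected` baseline."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the baseline is constant

    def shares(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            # Clamp values outside the baseline range into the edge bins.
            counts[min(max(idx, 0), bins - 1)] += 1
        # Floor empty bins at eps so the logarithm stays defined.
        return [max(c / len(values), eps) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))
```

A PSI of zero means the two samples fall into the bins identically. Larger values mean the live data has moved away from what the model was trained on, which is the signal to investigate the pipeline before model accuracy visibly drops.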

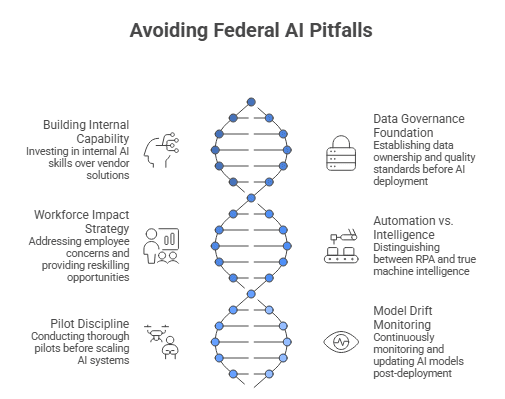

Common Mistakes Federal Agencies Must Avoid

- Mistake 1: Buying AI as a Product Rather Than Building a Capability. Vendors selling “AI-in-a-box” solutions rarely survive contact with the specificity of federal mission requirements. Agencies that invest in building internal AI capability, even through embedded contractor support, consistently outperform those that outsource the thinking.

- Mistake 2: Launching Without a Data Governance Foundation. Deploying AI on poorly governed data doesn’t just produce bad results; it produces confidently wrong results at scale. Establish data ownership, quality standards, and lineage tracking before you train anything.

- Mistake 3: Underestimating the Workforce Impact. Announcing AI deployment without a workforce strategy guarantees resistance. Employees who feel threatened by AI become the single biggest obstacle to adoption. Transparency about what AI will and won’t replace, combined with genuine reskilling investment, is non-negotiable.

- Mistake 4: Conflating Automation with Intelligence. Many “AI” federal projects are really robotic process automation (RPA) projects in disguise: valuable, but limited. Understand the distinction between automating a defined process and actually applying machine intelligence to unstructured, variable data. They require different architectures, teams, and governance models.

- Mistake 5: Skipping the Pilot and Going Straight to Scale. The pressure to show results quickly is real in federal environments. But scaling an undervalidated AI system in a federal context can create mission failure, public controversy, and legal liability that take years to recover from. Pilot discipline is not bureaucratic caution; it is strategic risk management.

- Mistake 6: Ignoring Model Drift Post-Deployment. A model trained on 2022 data may perform poorly on 2025 inputs as policy, language, and citizen behavior evolve. Federal agencies frequently forget that AI deployment is not a project with an end date; it’s an ongoing operational commitment requiring continuous monitoring.

Conclusion: The Federal AI Window Is Open, But Not Forever

The convergence of capable foundation models, cloud infrastructure, and federal AI policy frameworks has created an unprecedented window of opportunity. Agencies that act thoughtfully and decisively now will compound their capability advantage over the next five years. Those that wait for perfect conditions will find themselves perpetually behind.

The future of federal AI is not about replacing government employees with algorithms. It is about augmenting skilled public servants with tools that eliminate administrative friction, surface better information faster, and make every citizen interaction more accurate, equitable, and efficient.

Looking ahead to 2026 and beyond, several developments will reshape the federal AI landscape:

- Multi-agent systems will handle end-to-end case processing across agency boundaries, with humans supervising rather than executing workflows.

- AI-native procurement frameworks will emerge, replacing legacy FAR structures that weren’t designed for iterative software and model updates.

- Real-time, explainable AI will become a regulatory baseline, not a best practice, as oversight bodies like Congress and the GAO develop technical AI audit standards.

- Quantum-resistant AI security will become a federal mandate as quantum computing threats to encryption become operational realities.

- Citizen-facing personalization will evolve not into surveillance, but into intelligent service routing that connects individuals to the right benefit, program, or support the first time, every time.

The agencies that will lead this transformation are the ones building strategy, governance, and capability right now, not waiting for the next administration’s AI executive order to tell them where to start.

Partner With Government App Maisters

Navigating AI in federal government requires more than technical capability; it demands deep understanding of federal procurement, compliance frameworks, and mission-critical deployment realities.

Government App Maisters is a specialized technology partner built exclusively for federal agencies. From AI strategy and architecture to FedRAMP-authorized deployment and workforce enablement, the team at Government App Maisters has delivered proven results across civilian and defense agencies.

Whether you’re launching your first AI pilot, modernizing a legacy system, or scaling an existing AI program, Government App Maisters brings the federal expertise, technical depth, and delivery discipline your mission demands.

Ready to accelerate your agency’s AI transformation? Connect with the Government App Maisters team today to schedule a strategic assessment and discover what’s possible.
